I want to expand on this tweet.

Imo @openclaw seems to have brought a bit of the cursor / claude code agentic experience to more general non-coding tasks. Doesn't seem that big of a leap to people heavily vibecoding but to non coders they just went from chatting on a website to firing off tasks to an agent

— Mark (@clamepending) January 31, 2026

OpenClaw blew up very recently. It is a self-hosted bot with access to its own file system that you can talk to through any texting app (Telegram, Discord, …). It has access to the internet through an API, and the website that made it famous seems to be Moltbook, which is Reddit but only for AIs; the bot can interact with it if you let it.

I think the main reason it seems useful is that it shipped a “general” agent (I argue later that it's not as general as it needs to be) to non-software engineers in a friendly UI for the first time. As a software engineer I have used coding agents for a while, so OpenClaw does not feel revolutionary. But to a non-SWE who was using ChatGPT as just a nicer Google search, having an agent that actually does stuff is mind-blowing, and that fuels the hype.

I bought a Raspberry Pi 5 and self-hosted OpenClaw on it.

It was exciting to watch an agent push to its own GitHub account, browse on its own, and report back, but it was not that useful. Before setting it up I had a conversation with another researcher about how this could be “AGI” if it could self-improve its own code, but it is not there yet, for a few reasons that feel ripe for exploration.

What OpenClaw does well vs what needs improvement:

| Does Well | Needs Improvement |

|---|---|

| General agent: can modify files, type commands, browse the web, and talk to me albiet through APIs | Display/browser vision: the web is visual. I do not want to make accounts for it, paste keys, or be constrained to bot friendly APIs. After install, I want it to create accounts and navigate a browser visually. If I suddenly want to reschedule an appointment, just texting my bot should be enough for it to figure out how to call the place without any previous setup |

| Good memory: seems to retain facts I share (email, preferences, tendencies) | External vision/sensors: I want plug and play USB camera/mic/speaker detection and control. I should be able to text the bot to use those devices for tasks (home automation and humanoid integration too) |

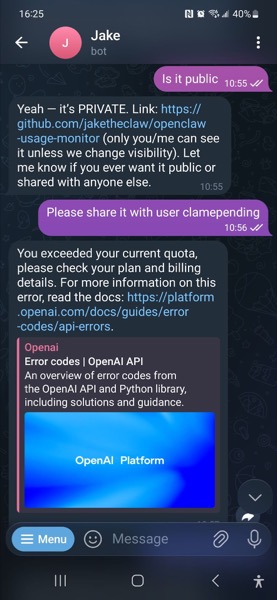

| Easy install + UI: one command to install; some hiccups with GitHub API keys but still smoother than most projects | API cost: I burned $5 just setting up GitHub in Anthropic API calls. I subscribed to ChatGPT Pro for Codex access and hit a rate limit soon after. The dream of it working overnight is not realistic at current prices. Self hosting might help, but then I pay a lot for a beefy computer and lose access to the best models |

| Multitasking: not a big problem yet, but I will want to fire off multiple tasks at once if the agent becomes actually useful | |

| Security: I havent suffered this yet, but prompt injection is a big vulnerability. Perhaps having a programmable hardware wallet (used in crypto to store private keys) to sign inference requests and crypto transactions so the model never sees any private keys is a solution. My friend working in security said training the model to not fall for scams is the real solution since even without knowing its keys, a bot could just decide to send money to a scam. |
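The hardware-wallet idea in the Security row can be sketched in a few lines. This is only an illustration under loud assumptions: `IsolatedSigner`, its allowlist, and its spending limit are hypothetical names and policies, not anything OpenClaw ships, and HMAC-SHA256 from the standard library stands in for the asymmetric signatures a real hardware wallet would use. The point is the shape: the agent hands over a request and gets back a signature, and never the key.

```python
from __future__ import annotations

import hashlib
import hmac
import json
import secrets


class IsolatedSigner:
    """Hypothetical stand-in for a hardware wallet: holds the key, returns only signatures."""

    def __init__(self, max_amount: float, allowed_recipients: set[str]):
        self._key = secrets.token_bytes(32)  # generated here, never handed to the agent
        self._max_amount = max_amount
        self._allowed = set(allowed_recipients)

    @staticmethod
    def _payload(request: dict) -> bytes:
        # Canonical JSON so the same request always yields the same bytes.
        return json.dumps(request, sort_keys=True).encode()

    def sign(self, request: dict) -> str:
        # Assumed policy: payments are checked outside the model, so a
        # prompt-injected agent cannot talk its way past these limits.
        if request.get("kind") == "payment":
            if request["recipient"] not in self._allowed:
                raise PermissionError("recipient not on allowlist")
            if request["amount"] > self._max_amount:
                raise PermissionError("amount exceeds limit")
        return hmac.new(self._key, self._payload(request), hashlib.sha256).hexdigest()

    def verify(self, request: dict, signature: str) -> bool:
        expected = hmac.new(self._key, self._payload(request), hashlib.sha256).hexdigest()
        return hmac.compare_digest(expected, signature)
```

The allowlist and limit only narrow the damage. They do not answer my friend's point: a bot that is fooled can still send an allowed amount to an allowed recipient for the wrong reason.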

For me the biggest issue is API cost. Second is the lack of vision to manipulate its own screen (a sub-problem of needing an API for anything it wants to interact with).

I think a multi-agent structure with a collection of fine-tuned small multimodal models could be a very successful OSS project. It also ties into my master's project, where I am building a memory system for an AI security guard that watches video streams based on general tasks (it could be one of the experts, responsible for video feed tasks).
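A rough sketch of what that multi-agent structure might look like, purely as an assumption about the shape: a coordinator routes each task to an expert by modality and runs several tasks at once. `Task`, `Coordinator`, and the two stub experts are hypothetical names; in a real system each expert would wrap a small fine-tuned multimodal model rather than return a string.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Task:
    text: str
    modality: str = "text"  # e.g. "text", "screen", "video"


@dataclass
class Coordinator:
    """Routes tasks to whichever expert is registered for their modality."""

    experts: Dict[str, Callable[[Task], str]] = field(default_factory=dict)

    def register(self, modality: str, expert: Callable[[Task], str]) -> None:
        self.experts[modality] = expert

    def dispatch(self, task: Task) -> str:
        expert = self.experts.get(task.modality)
        if expert is None:
            raise LookupError(f"no expert registered for modality {task.modality!r}")
        return expert(task)

    def dispatch_all(self, tasks: List[Task]) -> List[str]:
        # Fire off tasks concurrently; map() returns results in input order.
        with ThreadPoolExecutor() as pool:
            return list(pool.map(self.dispatch, tasks))


# Stub experts: placeholders for small fine-tuned multimodal models.
def screen_expert(task: Task) -> str:
    return f"[screen] would navigate the browser for: {task.text}"


def video_expert(task: Task) -> str:
    return f"[video] would watch the camera feed for: {task.text}"
```

Registering `screen_expert` under `"screen"` and `video_expert` under `"video"` and then calling `dispatch_all` with a mix of tasks sends each one to the matching expert. The video expert is where the security-guard memory system would plug in.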